CS607 GDB 1 Solution and discussion

-

GDB:

Machine learning algorithms are helpful in solving complex problems. Furthermore, in machine learning it is impossible to exhaustively search the entire concept space, i.e., the space used to represent the problem with respect to some given attributes. Secondly, during the training process the learner has to select a hypothesis whose output best fits the true output of the concept space.
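To see why exhaustive search over the concept space is out of reach, it helps to count the spaces for a small task. The sketch below assumes the attribute domain sizes of the EnjoySport example from Mitchell's "Machine Learning" (Sky with 3 values, five other attributes with 2 values each); these sizes are not given in the post. The function names are hypothetical helpers for illustration.

```python
from math import prod

# Assumed attribute domain sizes (EnjoySport-style task): Sky has 3 values;
# AirTemp, Humidity, Wind, Water and Forecast have 2 values each.
DOMAIN_SIZES = [3, 2, 2, 2, 2, 2]


def instance_space_size(sizes):
    """|X|: one value is chosen for every attribute."""
    return prod(sizes)


def concept_space_size(sizes):
    """Every subset of X is a possible target concept, giving 2^|X| concepts."""
    return 2 ** instance_space_size(sizes)


def conjunctive_hypothesis_count(sizes):
    """Semantically distinct conjunctive hypotheses: each attribute is either a
    specific value or '?', plus one hypothesis that labels everything negative."""
    return 1 + prod(s + 1 for s in sizes)


print(instance_space_size(DOMAIN_SIZES))           # 96
print(concept_space_size(DOMAIN_SIZES))            # 79228162514264337593543950336 (2**96)
print(conjunctive_hypothesis_count(DOMAIN_SIZES))  # 973
```

Even with only six attributes, the concept space (2^96 concepts) dwarfs the 973 conjunctive hypotheses, which is why learners restrict and order the hypothesis space instead of enumerating concepts.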

GDB Topic:

Discuss and compare which algorithm is best suited to either the general-to-specific or the specific-to-general ordering of the hypothesis space.

-

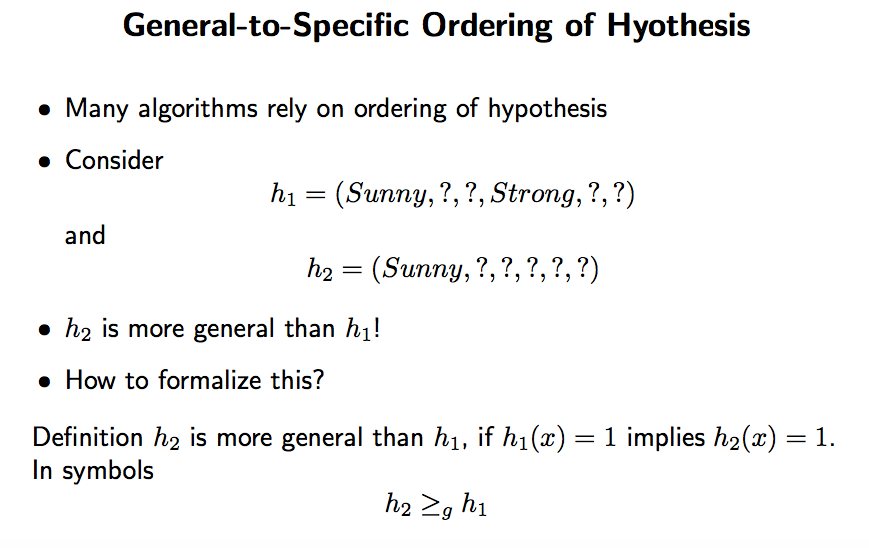

General-to-Specific Ordering of Hypotheses

• $\geq_g$ does not depend on the concept to be learned

• It defines a partial order over the set of hypotheses

• strictly-more-general-than: $>_g$

• more-specific-than: $\leq_g$

• Basis for the learning algorithms presented in the following!
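The ordering relations above can be made concrete for conjunctive hypotheses, where each attribute constraint is a specific value, "?" (any value) or "∅" (no value). This is a minimal sketch under that representation; it assumes every attribute has at least two values (otherwise a specific value and "?" would be semantically equal), and the function names are hypothetical.

```python
ANY = "?"   # constraint that accepts any value of the attribute
NONE = "∅"  # constraint that accepts no value at all


def covers_nothing(h):
    """A conjunctive hypothesis with any ∅ constraint matches no instance."""
    return NONE in h


def more_general_or_equal(hj, hk):
    """hj >=_g hk: every instance that satisfies hk also satisfies hj."""
    if covers_nothing(hk):
        return True   # vacuously true: hk matches no instance
    if covers_nothing(hj):
        return False  # hk matches some instance, hj matches none
    # Attribute by attribute, hj must be '?' or impose the same value as hk.
    return all(a == ANY or a == b for a, b in zip(hj, hk))


def strictly_more_general(hj, hk):
    """hj >_g hk: hj >=_g hk but not hk >=_g hj."""
    return more_general_or_equal(hj, hk) and not more_general_or_equal(hk, hj)


def more_specific_or_equal(hj, hk):
    """hj <=_g hk is simply hk >=_g hj."""
    return more_general_or_equal(hk, hj)


h1 = ("Sunny", ANY, ANY, ANY, ANY, ANY)
h2 = ("Sunny", ANY, ANY, "Strong", ANY, ANY)
print(more_general_or_equal(h1, h2))   # True
print(more_general_or_equal(h2, h1))   # False
print(strictly_more_general(h1, h2))   # True
print(more_specific_or_equal(h2, h1))  # True
```

Here h1 is strictly more general than h2 because it drops the Strong constraint on the fourth attribute; note that the test never looks at a target concept, matching the first bullet.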

• Find-S:

– Start with most specific hypothesis $(\emptyset, \emptyset, \emptyset, \emptyset, \emptyset, \emptyset)$

– Generalize if positive example is not covered!

-
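The two Find-S steps listed for the general-to-specific ordering can be sketched in a few lines. This is a minimal illustration, assuming the "?"/"∅" attribute representation; the function name `find_s` and the EnjoySport-style training examples are illustrative, not taken from the post.

```python
ANY = "?"   # accepts any value
NONE = "∅"  # accepts no value


def find_s(examples, n_attributes):
    """Find-S: start with the most specific hypothesis (∅, ..., ∅) and minimally
    generalize it whenever a positive example is not covered.
    Negative examples are ignored."""
    h = [NONE] * n_attributes
    for x, is_positive in examples:
        if not is_positive:
            continue
        for i, value in enumerate(x):
            if h[i] == NONE:
                h[i] = value   # ∅ -> the observed value (first positive example)
            elif h[i] != ANY and h[i] != value:
                h[i] = ANY     # conflicting values -> generalize to '?'
    return tuple(h)


# Illustrative EnjoySport-style data:
# (Sky, AirTemp, Humidity, Wind, Water, Forecast), EnjoySport?
examples = [
    (("Sunny", "Warm", "Normal", "Strong", "Warm", "Same"), True),
    (("Sunny", "Warm", "High", "Strong", "Warm", "Same"), True),
    (("Rainy", "Cold", "High", "Strong", "Warm", "Change"), False),
    (("Sunny", "Warm", "High", "Strong", "Cool", "Change"), True),
]

print(find_s(examples, 6))
# ('Sunny', 'Warm', '?', 'Strong', '?', '?')
```

Because Find-S only ever moves upward in the $\geq_g$ ordering, it returns the most specific hypothesis consistent with the positive examples; it cannot detect inconsistent data, since negative examples are never checked.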

In general, machine learning algorithms apply some optimization algorithm to find a good hypothesis. In this case, $J$ is piecewise constant, which makes this a difficult problem.

Direct Computation. The maximum likelihood estimate of $P(x, y)$ can be computed from the data without search. However, inverting the $\Sigma$ matrix requires $O(n^3)$ time.

-
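The post does not define $J$; a common case where it is piecewise constant is the 0/1 training error of a linear threshold classifier. The sketch below assumes that reading, uses a single input feature, and runs on a hypothetical five-point training set, to show why search over $J$ is hard: its value only jumps when the decision threshold crosses a training point.

```python
def zero_one_error(w, b, data):
    """Assumed J(w, b): number of training points misclassified by the
    threshold classifier sign(w * x + b), with one input feature x."""
    errors = 0
    for x, y in data:                       # labels y are +1 or -1
        prediction = 1 if w * x + b >= 0 else -1
        errors += prediction != y           # True counts as 1
    return errors


# Hypothetical 1-D training set.
data = [(-2.0, -1), (-1.0, -1), (0.5, 1), (1.5, -1), (3.0, 1)]

# Sweep the bias b with w fixed at 1. J changes only when the threshold
# x = -b crosses a training point, so it is flat between those points
# and its gradient is zero almost everywhere.
biases = [-3.5, -2.5, -1.0, 0.0, 0.4, 1.2]
J_values = [zero_one_error(1.0, b, data) for b in biases]
print(J_values)  # [2, 1, 2, 1, 1, 2]
```

Moving b from 0.0 to 0.4 leaves $J$ unchanged, so a gradient-based optimizer gets no signal there; this is the contrast with the direct, search-free maximum likelihood computation.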

GDB: Fall-2019

In machine learning, the problem space can be represented through the concept space, instance space, version space, and hypothesis space. These representations use a conjunctive hypothesis space, which is very restrictive; moreover, with the above-mentioned representations there is no guarantee that the true concept lies within the conjunctive space.
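The claim that the true concept may lie outside the conjunctive space can be checked on a tiny example. This sketch assumes a hypothetical problem with two boolean attributes A and B and the disjunctive target concept "A = 1 OR B = 1"; it enumerates every conjunctive hypothesis and tests whether any of them covers exactly the target's positive instances.

```python
from itertools import product

ANY = "?"  # accepts any value of the attribute


def satisfies(h, x):
    """True if instance x meets every constraint of conjunctive hypothesis h."""
    return all(a == ANY or a == v for a, v in zip(h, x))


def conjunctive_hypotheses(domains):
    """Every conjunctive hypothesis whose constraints are a value or '?'.
    (Hypotheses containing ∅ all cover nothing and are handled separately.)"""
    return product(*[list(d) + [ANY] for d in domains])


# Hypothetical problem: two boolean attributes A and B.
domains = [(0, 1), (0, 1)]
instances = list(product(*domains))

# Hypothetical target concept: A = 1 OR B = 1 (a disjunction).
target_set = {x for x in instances if x[0] == 1 or x[1] == 1}

extensions = {frozenset(x for x in instances if satisfies(h, x))
              for h in conjunctive_hypotheses(domains)}
extensions.add(frozenset())  # the ∅ hypotheses, which cover no instance

representable = frozenset(target_set) in extensions
print(representable)  # False
print(len(extensions), "of", 2 ** len(instances), "concepts")  # 10 of 16 concepts
```

Only 10 of the 16 possible concepts over these four instances are expressible as conjunctions, and the disjunction is not one of them.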

GDB Topic:

Discuss the case where we have a bigger search space and want to overcome the restrictive nature of the conjunctive space: how can we represent our problem space? Secondly, in the given scenario, which algorithm is used on our problem space to represent the learning problem?